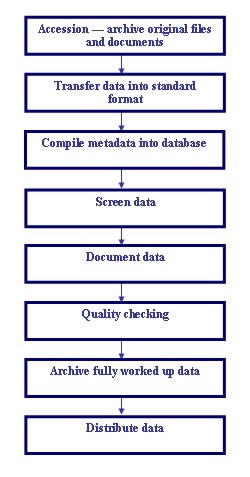

Moored instrument data processing

Moored instrument data go through several steps before they are incorporated into the National Oceanographic Database (NODB). Our aim is to ensure the data are of a consistent standard and to guarantee their long-term security and utilisation.

1. Archive original data. When the data are first received, they go through our Accession procedure: the data are securely archived in their original form, along with any associated documentation.
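The source does not say how archived originals are protected against accidental change. One common safeguard, shown here purely as an assumption, is to record a checksum for every file at accession and re-verify it later. A minimal Python sketch using the standard `hashlib` module; the function names are hypothetical:

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(files: list[Path]) -> dict[str, str]:
    """Record a checksum for every original file at accession time (assumed practice)."""
    return {str(p): sha256_of(p) for p in files}


def verify_manifest(manifest: dict[str, str]) -> list[str]:
    """Return the files whose current checksum no longer matches the manifest."""
    return [name for name, expected in manifest.items()
            if sha256_of(Path(name)) != expected]
```

An empty list from `verify_manifest` means every original file still matches the checksum recorded when it was accessioned.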

2. Transfer data into standard format. Data arrive in various formats and are transferred to our standard format, netCDF, which is a binary format. NetCDF can handle multi-dimensional data from instruments such as moored Acoustic Doppler Current Profilers (ADCPs), and it is platform-independent. The transfer is carried out with MATLAB software, including the netCDF toolbox.

Each data channel is assigned an eight-byte parameter code, and the data are converted to standard BODC units. Where data values are missing, the parameter's 'absent' value is inserted.
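The channel standardisation described above can be sketched as follows. This is a minimal illustration, not BODC's MATLAB code: the channel names, the parameter codes `TEMPXX01` and `SPEEDX01`, the scale factors and the absent value of -999 are all hypothetical.

```python
from __future__ import annotations

import math

# Hypothetical, illustrative values only: real parameter codes, units and
# absent values come from BODC's own vocabularies, not from this table.
ABSENT = -999.0  # assumed 'absent' value for every parameter in this sketch

# originator channel name -> (assumed 8-character parameter code, scale, offset)
CHANNEL_MAP = {
    "temp_mC": ("TEMPXX01", 0.001, 0.0),   # millidegrees C -> degrees C
    "speed_mm_s": ("SPEEDX01", 0.1, 0.0),  # mm/s -> cm/s
}


def standardise(channel: str, values: list[float | None]) -> tuple[str, list[float]]:
    """Return the parameter code and the values in standard units, gaps filled."""
    code, scale, offset = CHANNEL_MAP[channel]
    if len(code) != 8:
        raise ValueError(f"parameter code {code!r} is not eight characters")
    out = []
    for v in values:
        if v is None or math.isnan(v):
            out.append(ABSENT)  # missing sample -> the parameter's absent value
        else:
            out.append(v * scale + offset)  # linear conversion to standard units
    return code, out
```

A linear scale-and-offset table covers most unit changes (for example mm/s to cm/s); non-linear conversions would need a function per channel instead.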

3. Compile metadata. The metadata (data about data), e.g. collection dates and times, mooring position, instrument type, instrument depth and sea floor depth, are loaded into Oracle database tables. These are carefully checked for errors and for consistency with the data. The data originators are contacted if any problems cannot be resolved clearly.
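A few of the consistency checks described above can be expressed as simple rules. The sketch below is hypothetical: the field names and the specific rules (coordinates in range, instrument above the sea floor, samples within the deployment period) are assumptions chosen to match the metadata listed, not BODC's actual checklist.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class MooringMetadata:
    # Hypothetical field names mirroring the metadata listed in step 3.
    latitude: float
    longitude: float
    instrument_depth_m: float
    sea_floor_depth_m: float
    deployed: datetime
    recovered: datetime


def check_metadata(meta: MooringMetadata, first_sample: datetime,
                   last_sample: datetime) -> list[str]:
    """Return human-readable problems; an empty list means none were found."""
    problems = []
    if not -90 <= meta.latitude <= 90:
        problems.append("latitude out of range")
    if not -180 <= meta.longitude <= 180:
        problems.append("longitude out of range")
    if meta.instrument_depth_m > meta.sea_floor_depth_m:
        problems.append("instrument deeper than sea floor")
    if meta.recovered <= meta.deployed:
        problems.append("recovery is not after deployment")
    # Consistency between metadata and data: samples must fall inside the deployment.
    if first_sample < meta.deployed or last_sample > meta.recovered:
        problems.append("data extend outside the deployment period")
    return problems
```

Returning a list of messages rather than raising on the first failure suits a review workflow: every problem can be put to the data originator at once.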

4. Screen data using BODC's in-house visualisation software. This software can be used to:

- show a map of mooring positions

- plot time series for:

  - current meter data

  - water level recorder data

  - transmissometer data

  - nutrient analyser data

  - pressure recorder data

- plot current meter data as scatter plots

- plot profiles for ADCP and thermistor chain data
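The source does not say how the current meter scatter plots are constructed. A common choice, assumed here, is to plot eastward (u) against northward (v) velocity, which requires converting speed and direction into components. By oceanographic convention, current direction is the direction the water flows towards, measured clockwise from north. A minimal sketch (the function name is hypothetical):

```python
import math


def to_components(speed: float, direction_deg: float) -> tuple[float, float]:
    """Convert current speed and direction (degrees clockwise from north,
    the direction the water flows towards) into eastward (u) and
    northward (v) components, in the same units as speed."""
    theta = math.radians(direction_deg)
    return speed * math.sin(theta), speed * math.cos(theta)
```

Applying `to_components` to each (speed, direction) pair gives the points for a u-v scatter plot, which can then be drawn with any plotting library.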

Parameters can be plotted together and records from different instruments compared. Data values are NOT changed or removed, but they may be flagged if they appear suspect: 'L' marks values the originator regards as suspect, 'M' marks values BODC judges to be suspect, and 'N' marks absent data.
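The flagging rules above can be sketched in a few lines. Two assumptions are made that the source does not state: a simple valid-range test stands in for BODC's visual screening, and when several flags could apply, 'N' takes precedence over 'L', which takes precedence over 'M'. Flags are returned as a separate list so that, as described, the data values themselves are never altered.

```python
from __future__ import annotations

ORIGINATOR_SUSPECT = "L"
BODC_SUSPECT = "M"
ABSENT_FLAG = "N"
GOOD = ""  # assumed: an unflagged value carries a blank flag


def flag_series(values: list[float | None], originator_suspect: set[int],
                valid_range: tuple[float, float]) -> list[str]:
    """Return one flag per value; the input values are never modified."""
    low, high = valid_range
    flags = []
    for i, v in enumerate(values):
        if v is None:
            flags.append(ABSENT_FLAG)
        elif i in originator_suspect:
            flags.append(ORIGINATOR_SUSPECT)
        elif not low <= v <= high:
            flags.append(BODC_SUSPECT)  # range test standing in for visual screening
        else:
            flags.append(GOOD)
    return flags
```

For example, `flag_series([10.0, None, 50.0, 11.0], {3}, (0.0, 30.0))` flags the second value as absent, the third as BODC-suspect and the fourth as originator-suspect.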

5. Document all data sets to ensure they can be used in the future without ambiguity or uncertainty. Comprehensive documentation is compiled using information supplied by the data originator and any information gained during BODC screening. It includes:

- Data collection, analysis and instrument details

- The context of the data

- BODC processing and quality control

6. Check data quality before loading into the database. The netCDF files and metadata are thoroughly checked with MATLAB software to ensure they conform to BODC's stringent standards.
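A conformance check of this kind could look like the sketch below. It is hypothetical throughout: BODC's checks are done in MATLAB, and the required metadata fields and the unit table here are assumptions. Variable attributes are passed as plain dictionaries so the sketch runs without a netCDF library; in practice they would be read from the file itself.

```python
# Hypothetical list of metadata fields every data set must carry.
REQUIRED_METADATA = ("latitude", "longitude", "instrument_depth", "sea_floor_depth")


def conformance_errors(variables: dict[str, dict[str, str]],
                       expected_units: dict[str, str],
                       metadata: dict[str, object]) -> list[str]:
    """Check parameter codes, units and required metadata; return any failures.

    variables maps each parameter code to that variable's attributes.
    """
    errors = []
    for code, attrs in variables.items():
        if len(code) != 8:
            errors.append(f"{code}: parameter code is not eight characters")
        expected = expected_units.get(code)
        if expected is None:
            errors.append(f"{code}: no standard unit registered")
        elif attrs.get("units") != expected:
            errors.append(f"{code}: units {attrs.get('units')!r} != {expected!r}")
    for key in REQUIRED_METADATA:
        if metadata.get(key) is None:
            errors.append(f"metadata field {key!r} is missing")
    return errors
```

A file would be accepted for loading only when the returned list is empty.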

7. Archive the final data set. This is carried out by the BODC Database Manager.

8. Distribute and deliver data. Some data are available on request; others are available via our website.